
Artificial Intelligence (AI) has become an integral part of our daily lives, revolutionizing industries and offering numerous benefits. However, as AI technology continues to advance, it brings forth a myriad of ethical concerns. In this blog post, we will explore the key ethical dilemmas associated with AI in 2023 and provide you with actionable solutions to ensure that AI uplifts humanity rather than hinders it.

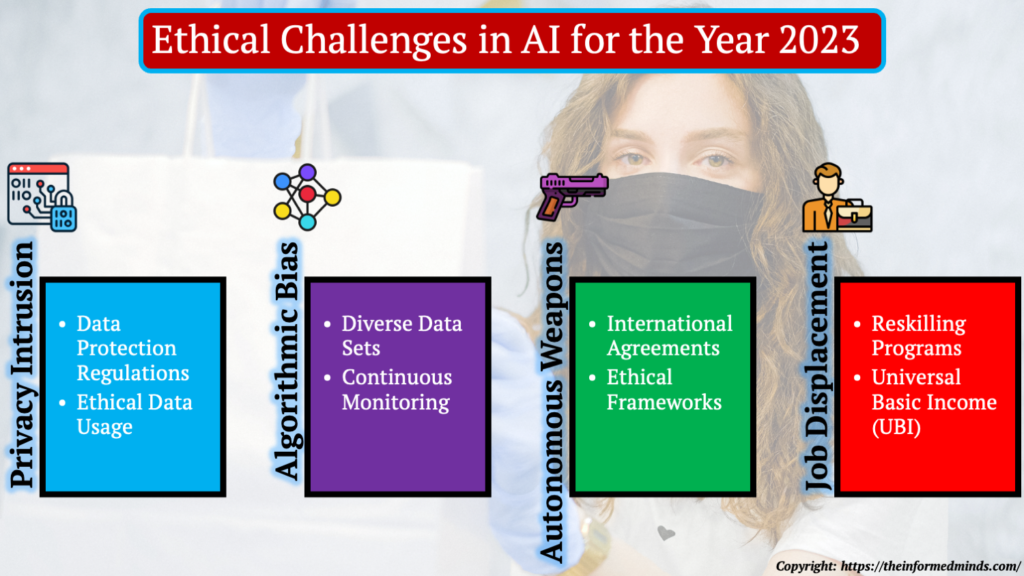

1. Ethical Dilemmas in AI in 2023

1.1 Privacy Intrusion

1.1.1 Data Protection Regulations

Definition: Data protection regulations are legal frameworks that govern the collection, processing, storage, and sharing of personal data. They are designed to protect individuals’ privacy and provide guidelines for how organizations and governments can handle personal information.

Purpose: These regulations aim to ensure that personal data is handled responsibly and that individuals have control over their information. They require organizations to be transparent about their data practices, obtain consent from individuals for data processing, and put security measures in place to prevent data breaches.

Examples: GDPR (General Data Protection Regulation) in the European Union, CCPA (California Consumer Privacy Act) in California, and HIPAA (Health Insurance Portability and Accountability Act), which covers health information in the United States, are examples of data protection regulations.

1.1.2 Ethical Data Usage

Definition: Ethical data usage refers to the responsible and transparent handling of personal data. It involves obtaining informed consent, providing clear information about data collection and usage, and ensuring data security.

Purpose: Ethical data usage is critical to protecting individuals’ privacy. It ensures that data is collected with the consent of the individuals involved, that it is used only for the purposes specified, and that it is protected from unauthorized access or misuse.

Examples: Ethical data usage can be seen in practices where organizations clearly inform users about data collection, use encryption and security measures to safeguard data, and allow users to manage their data preferences, such as opting out of data collection.
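The consent and opt-out practices described above can be sketched in a few lines of Python. This is only an illustration under simple assumptions: the `ConsentRecord` class, the `may_process` function, and the purpose names are hypothetical and do not come from any particular regulation or library.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    """Hypothetical record of what one user has agreed to."""
    user_id: str
    allowed_purposes: set = field(default_factory=set)
    opted_out: bool = False


def may_process(record: ConsentRecord, purpose: str) -> bool:
    """Allow processing only for purposes the user consented to,
    and never after the user has opted out."""
    if record.opted_out:
        return False
    return purpose in record.allowed_purposes


# Example user who consented to order handling but not to marketing.
alice = ConsentRecord("alice", {"order_fulfilment"})
print(may_process(alice, "order_fulfilment"))
print(may_process(alice, "marketing"))
```

Checking the purpose on every use, rather than once at collection time, is one way to keep data tied to the reason it was gathered.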

1.2 Algorithmic Bias

1.2.1 Diverse Data Sets

Definition: Diverse data sets refer to the inclusion of data from a wide range of demographic, cultural, and socioeconomic groups in the training data used to develop AI algorithms.

Purpose: Including diverse data is vital to reduce bias in AI algorithms. When AI systems are trained on a representative dataset, they are less likely to favor or discriminate against certain groups. This results in fairer and more accurate outcomes.

Examples: To reduce bias, AI developers can check that training data covers a range of ethnicities, genders, age groups, and backgrounds. For example, when training facial recognition technology, it’s essential to have a diverse set of faces to avoid higher error rates for certain groups.
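One simple way to check how well groups are represented is to measure each group's share of the training data. The sketch below assumes each record carries a single group label. The function names, the sample data, and the 20% threshold are illustrative assumptions, not an established standard.

```python
from collections import Counter


def group_shares(labels):
    """Return each group's fraction of the dataset."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}


def underrepresented(labels, min_share=0.2):
    """List groups whose share falls below min_share (an assumed cutoff)."""
    shares = group_shares(labels)
    return sorted(g for g, share in shares.items() if share < min_share)


# Made-up sample: 70 records from group A, 25 from B, 5 from C.
sample = ["A"] * 70 + ["B"] * 25 + ["C"] * 5
print(group_shares(sample))
print(underrepresented(sample))
```

A check like this only flags missing coverage. It does not by itself show that a model treats groups fairly, which is why monitoring of outputs is also needed.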

1.2.2 Continuous Monitoring

Definition: Continuous monitoring involves regularly assessing the outputs and performance of AI systems to detect and correct bias or any other issues that may arise over time.

Purpose: Bias in AI can emerge as systems evolve and encounter new data. Continuous monitoring helps developers identify and address bias or errors soon after they appear, maintaining the fairness and accuracy of the AI.

Examples: Continuous monitoring may involve periodic audits of AI systems, evaluating the accuracy of algorithmic decisions, and using specialized tools that can identify bias in real-time, allowing for prompt correction.
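A periodic audit can be as simple as comparing how often each group receives a positive decision. The sketch below computes that gap, sometimes called the demographic parity difference, on a batch of predictions. The function names, the sample data, and the 0.1 threshold are assumptions chosen for illustration, and real audits usually combine several metrics.

```python
def positive_rates(predictions, groups):
    """Fraction of positive (1) predictions for each group."""
    totals, positives = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if pred == 1 else 0)
    return {g: positives[g] / totals[g] for g in totals}


def parity_gap(predictions, groups):
    """Largest difference in positive rate between any two groups."""
    rates = positive_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


def needs_review(predictions, groups, threshold=0.1):
    """Flag the batch when the gap exceeds an assumed threshold."""
    return parity_gap(predictions, groups) > threshold


# Made-up batch of decisions for two groups.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
grps = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(positive_rates(preds, grps))
print(needs_review(preds, grps))
```

Running a check like this on each new batch of decisions gives an early signal that a system's behavior has drifted and needs a closer look.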

1.3 Autonomous Weapons

1.3.1 International Agreements

Definition: International agreements are treaties or pacts between countries or organizations that establish rules, standards, and guidelines for the responsible use of technology, including AI in weaponry.

Purpose: International agreements aim to prevent the development and deployment of autonomous weapons that could pose a threat to humanity. They emphasize the importance of maintaining human control over lethal decision-making in warfare.

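Examples: The Convention on Certain Conventional Weapons (CCW) is a treaty under which countries have discussed limits on lethal autonomous weapons. Advocacy efforts such as the Campaign to Stop Killer Robots, a coalition of non-governmental organizations, push for international consensus on banning or limiting the use of fully autonomous weapons.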

1.3.2 Ethical Frameworks

Definition: Ethical frameworks are sets of principles and guidelines that AI developers and organizations adopt to ensure that their technology is developed and used ethically and responsibly.

Purpose: These frameworks are designed to steer AI development in a direction that prioritizes safety and human well-being, while avoiding harmful applications.

Examples: Ethical frameworks can include guidelines such as a commitment to not developing AI for use in harmful or unethical purposes, a dedication to transparency in AI development, and a focus on safety measures to prevent accidents or misuse.

1.4 Job Displacement

1.4.1 Reskilling Programs

Definition: Reskilling programs are initiatives that provide training and education to individuals whose jobs may be displaced by automation, including AI. These programs equip workers with new skills to transition into different roles or industries.

Purpose: The purpose of reskilling programs is to mitigate the negative impact of job displacement by empowering workers to adapt to the changing job market. They help ensure that individuals remain employable and can find new opportunities.

Examples: Many governments and companies offer reskilling programs in sectors where job displacement is likely, such as manufacturing, where workers can learn new skills in areas like coding, data analysis, or healthcare.

1.4.2 Universal Basic Income (UBI)

Definition: Universal Basic Income (UBI) is a social policy that provides all citizens with a regular, unconditional cash payment from the government, regardless of their employment status.

Purpose: UBI aims to provide individuals with a financial safety net, particularly those who may lose their jobs due to automation or AI-driven job displacement. It ensures that basic needs are met, promoting economic security.

Examples: Several countries and regions, including Finland, Canada, and some U.S. cities, have run basic income pilot programs to assess the impact of this policy on income equality and social well-being.

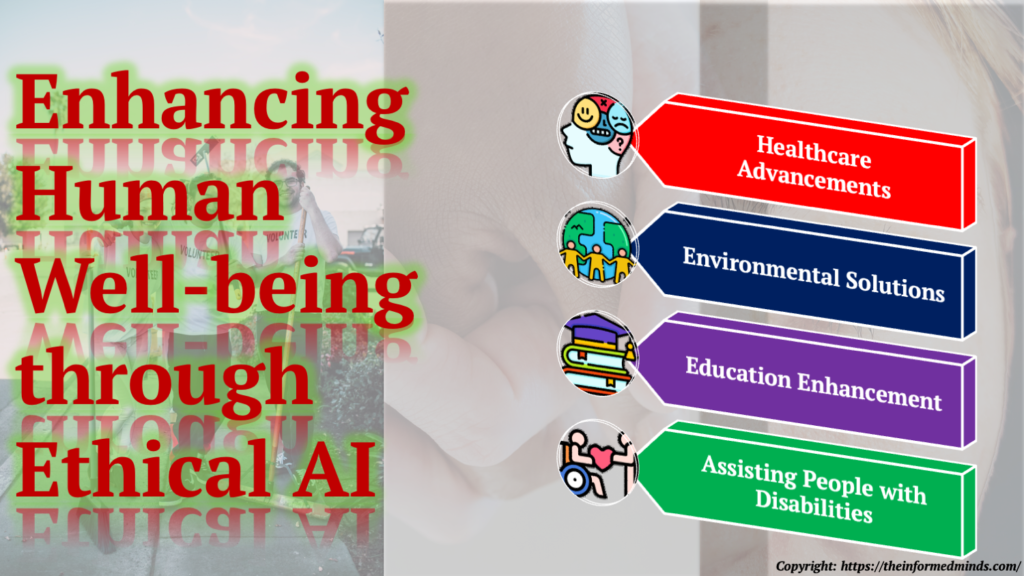

2. Uplifting Humanity with Ethical AI

2.1 Healthcare Advancements

AI has the potential to significantly improve healthcare. By analyzing large datasets and assisting in diagnostics, AI can enhance the accuracy of medical diagnoses, making healthcare more accessible and efficient. Additionally, AI can predict disease outbreaks and optimize treatment plans.

2.2 Environmental Solutions

AI can be harnessed to address pressing environmental challenges, such as climate change and conservation efforts. AI-powered data analysis can help monitor climate patterns, predict natural disasters, and optimize resource management for sustainability.

2.3 Education Enhancement

AI can revolutionize education by offering personalized learning experiences. AI-driven platforms can adapt to students’ individual needs, providing tailored content and support, thereby making education more effective and inclusive.

2.4 Assisting People with Disabilities

AI-driven technology has the potential to enhance the lives of people with disabilities. Assistive technologies, such as speech recognition and mobility aids, can empower individuals with disabilities by increasing their independence and improving their access to services and information.

Conclusion

By understanding and implementing these solutions to ethical dilemmas in AI, we can better harness the potential of AI to uplift humanity while minimizing its negative impact. These strategies and approaches are critical for ensuring that AI technologies are developed and used in ways that are ethical, responsible, and beneficial to society.

Frequently Asked Questions

Q1. How can we overcome ethical issues of AI?

A: Ethical issues in AI can be overcome through a combination of regulatory frameworks, diverse and representative data, ongoing monitoring for bias, and promoting responsible AI development practices.

Q2. What is the future of ethical AI?

A: The future of ethical AI involves continued efforts to ensure that AI technologies are developed and used in ways that prioritize fairness, transparency, accountability, and human well-being. This includes advancing ethical guidelines and regulations.

Q3. What are the three potential solutions to the ethical dilemma?

A: Three potential solutions to ethical dilemmas in AI are: 1) Implementing data protection regulations, 2) Ensuring diverse data sets for training, and 3) Promoting international agreements on AI use in areas like autonomous weapons.

Q4. What are some ethical dilemmas involving robots?

A: Ethical dilemmas for robots include issues related to decision-making, moral responsibility, and the potential consequences of their actions in various contexts, such as autonomous vehicles and caregiving robots.

Q5. What are the ethical dilemmas behind AI?

A: Ethical dilemmas in AI include concerns about privacy intrusion, algorithmic bias, job displacement, and the development of autonomous weapons, as well as the responsible use of AI in various applications.

Q6. How do you promote ethical AI?

A: Ethical AI is promoted through the adoption of ethical frameworks, regulations, and guidelines, along with responsible data collection and usage, diverse training data, continuous monitoring for bias, and transparency in AI development.

Q7. What is the impact of AI in 2023?

A: In 2023, the impact of AI includes advancements in healthcare, environmental solutions, education enhancement, and improvements for people with disabilities. It also involves addressing ethical concerns surrounding AI.

Q8. What is the future of AI in 2023?

A: The future of AI in 2023 is characterized by continued growth and innovation in fields like machine learning, robotics, and natural language processing, with AI playing a prominent role in various industries.

Q9. How will AI change the future in 2023?

A: AI in 2023 will change the future by driving automation, enhancing decision-making processes, and revolutionizing industries such as healthcare, education, and environmental conservation.

Q10. What is the best way to avoid ethical dilemmas?

A: The best way to avoid ethical dilemmas in AI is by proactively addressing potential issues, following ethical guidelines, and ensuring transparency and responsible data usage throughout the development and deployment of AI systems.

Q11. What are the six steps to resolving an ethical dilemma?

A: The six steps to resolving an ethical dilemma typically involve recognizing the dilemma, gathering information, evaluating options, making a decision, taking action, and reflecting on the outcome.

Q12. What are the 4 approaches to solving ethical dilemmas?

A: The four common approaches to solving ethical dilemmas include the utilitarian approach, the deontological approach, the virtue ethics approach, and the rights-based approach.

Q13. What are the two main areas of AI ethics?

A: AI ethics is often divided into two areas: the ethics of AI development, focusing on how AI is created, and the ethics of AI deployment, addressing how AI is used and the impacts it has on society.

Q14. What is the ethical dilemma we face with AI and autonomous technology?

A: The ethical dilemma surrounding AI and autonomous technology involves concerns about the responsible use of AI in areas like autonomous vehicles, drones, and weaponry, as well as the potential loss of human control in critical decisions.

Q15. What are the main issues with artificial intelligence and robotics?

A: The main issues with artificial intelligence and robotics include ethical dilemmas, such as privacy intrusion and algorithmic bias, as well as concerns about job displacement, safety, and the impact of AI and robotics on society.

The Informed Minds

I'm Vijay Kumar, a consultant with 20+ years of experience specializing in Home, Lifestyle, and Technology. From DIY and Home Improvement to Interior Design and Personal Finance, I've worked with diverse clients, offering tailored solutions to their needs. Through this blog, I share my expertise, providing valuable insights and practical advice for free. Together, let's make our homes better and embrace the latest in lifestyle and technology for a brighter future.